Are news outlets viewed in the same way by experts and the public? A comparison across 23 European countries

French Economy and Finance Minister Bruno Le Maire is seen on a TV screen during a television interview at the Bercy Finance Ministry, following the outbreak of the coronavirus disease (COVID-19) in Paris, France, April 24, 2020. REUTERS/Benoit Tessier

DOI: 10.60625/risj-nbg4-8b23

Key Findings

In this Reuters Institute factsheet, we compare expert evaluations of news outlets’ accuracy from the 2017 European Media Systems Survey (Popescu et al. forthcoming) with the Digital News Report 2018 audience brand trust scores (Newman et al. 2018). Our analysis shows that expert assessments of accuracy and public trust ratings for the same outlets are strongly aligned. Specifically, we find:

- A strong positive correlation (r = .78, p < .001, N = 226) at the outlet level between public trust and accuracy assessments by experts. In other words, outlets that have higher accuracy ratings from experts tend to have higher trust ratings from the public. Outlets deemed less accurate by experts tend to be less trusted by the public.

- On average, both experts and the public rate news from public service media outlets as the most accurate and the most trustworthy, respectively. News from digital-born brands is on average rated lower by both. Commercial TV and newspapers sit between the two for both experts and the public.

- Although public trust and expert ratings are broadly consistent, relative to what we would expect based on expert opinion of accuracy, TV outlets (especially commercial TV) appear to be slightly more trusted by the public, whereas newspapers and digital-born outlets appear to be slightly less trusted.

Introduction

Trust in the news is now a key concern for news organisations, journalists, academics, and policy-makers alike. Against the backdrop of massive growth in the number of sources to choose from, the widespread use of search engines, social media, and messaging apps to arrive at news, and renewed concerns over misinformation, a key question emerges: are news outlets viewed in the same way by experts and the public?

This question is intellectually interesting, but it is also of practical importance. Journalists and the news media are increasingly focused on understanding and addressing issues of trust, for example through efforts like the Journalism Trust Initiative and the Trust Project. In addition, some algorithmically driven services, including Facebook’s news feed,¹ are, in some countries at least, partially reliant on aggregate trust ratings from their users when making automated decisions about what content to prioritise.

In this factsheet we compare the level of trust people have in 226 individual news outlets across 23 European countries (24 markets) with assessments of the accuracy of the same outlets from experts, and explore how this varies across different types of outlets (e.g. newspapers, television, and online-only).² Of course, neither of these necessarily reflects how accurate or trustworthy certain news sources actually are. The purpose here is not to make judgements about individual news outlets, but to find out whether the views of experts and the public are similar or different.

Data

Our starting point in this factsheet is whether news outlets are viewed in the same way by experts and the public. To begin to answer this, we used the 2018 Digital News Report (DNR) survey on the level of trust people have in news from different outlets. Specifically, respondents from nationally representative samples were asked to rate outlets on a 0 to 10 scale, where 0 referred to ‘not at all trustworthy’ and 10 to ‘completely trustworthy’. These were published as average (mean) outlet trust scores.

Here, we compare these scores to data from the 2017 European Media Systems Survey (EMSS). In this survey, media experts in each country were asked ‘to what extent do these media provide accurate information on facts backed by credible sources and expertise?’ for a series of news outlets, and asked to score them on a scale ranging from 0 ‘never’ to 10 ‘always’. Again, these were converted into average (mean) accuracy ratings per outlet.

Both surveys were fielded in several countries, producing overlapping data for 23 European countries (24 markets) and 226 outlets. The DNR and EMSS projects exist separately from one another, so what we present here is secondary analysis (see the methodological appendix for a fuller description of the methods and sample).

The countries included in both surveys are spread across geographic regions and media systems:

- Northern Europe: Denmark, Finland, Norway, Sweden.

- Central and Western Europe: Austria, Belgium (Flemish- and French-speaking), Germany, Ireland, Netherlands, Switzerland (German only), UK.

- Southern Europe: France, Greece, Spain, Italy, Portugal.

- Eastern Europe: Bulgaria, Croatia, Czech Republic, Hungary, Poland, Romania, Slovakia.

Results

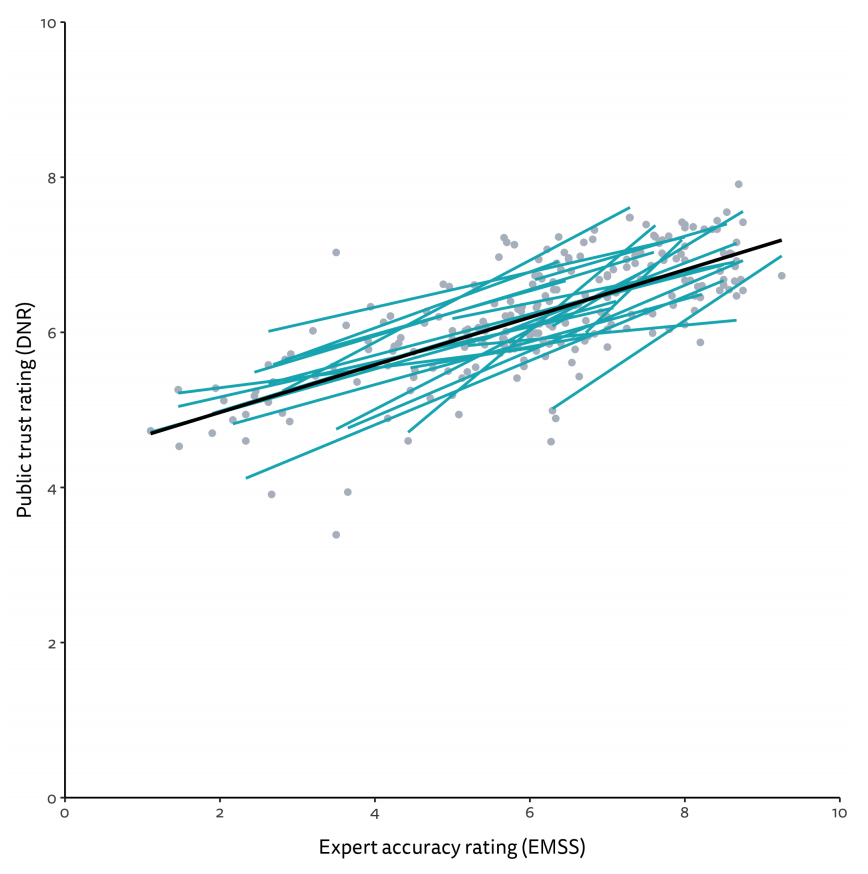

To start, we can get a sense of the overall similarity of the public and expert scores for these 226 news outlets by simply plotting audience trust against the expert ratings for all countries (see Figure 1). This already suggests a strong positive correlation between the two. The trend line suggests that the more accurate experts rate a news outlet on average, the higher the average public trust in it.

Figure 1. Correlation between public trust and expert accuracy ratings

We can express this numerically by performing a correlation test. As we have several countries in our sample, it makes sense to use a multilevel correlation test to account for the fact that the data are clustered within countries. The result of a multilevel Pearson correlation test indicated a strong, statistically significant, positive correlation between expert accuracy assessments and public trust ratings for news outlets across 24 markets in Europe (r = .78, p < .001).³ In other words, outlets that have higher accuracy ratings from experts tend to have higher trust ratings from the public. Outlets deemed less accurate by experts tend to be less trusted by the public.
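To make the idea of accounting for country clustering concrete, the following is a minimal sketch in Python. It is a simplified stand-in and not the procedure implemented in the R package cited in the footnote: it removes each country's mean from both scores and then computes an ordinary Pearson correlation on what remains. The function names, country labels, and outlet scores are all hypothetical.

```python
from collections import defaultdict
from math import sqrt


def pearson(xs, ys):
    """Plain Pearson correlation between two equal-length lists."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


def within_country_correlation(rows):
    """Correlate accuracy and trust after subtracting each country's means.

    rows: iterable of (country, expert_accuracy, public_trust) tuples.
    Assumption: country-mean centring is used here as a simple way to
    remove between-country differences; it is not a mixed model.
    """
    by_country = defaultdict(list)
    for country, accuracy, trust in rows:
        by_country[country].append((accuracy, trust))

    centred_accuracy, centred_trust = [], []
    for pairs in by_country.values():
        mean_accuracy = sum(a for a, _ in pairs) / len(pairs)
        mean_trust = sum(t for _, t in pairs) / len(pairs)
        for accuracy, trust in pairs:
            centred_accuracy.append(accuracy - mean_accuracy)
            centred_trust.append(trust - mean_trust)
    return pearson(centred_accuracy, centred_trust)


# Hypothetical outlet-level scores for two made-up countries "A" and "B".
rows = [
    ("A", 7.5, 7.0), ("A", 6.0, 6.2), ("A", 4.5, 5.1),
    ("B", 6.5, 5.8), ("B", 5.0, 5.0), ("B", 3.5, 4.1),
]
r = within_country_correlation(rows)
print(f"within-country r = {r:.2f}")
```

Centring by country means that a country where all outlets are rated high (or low) by both groups does not inflate the correlation on its own; only differences between outlets within the same country contribute.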

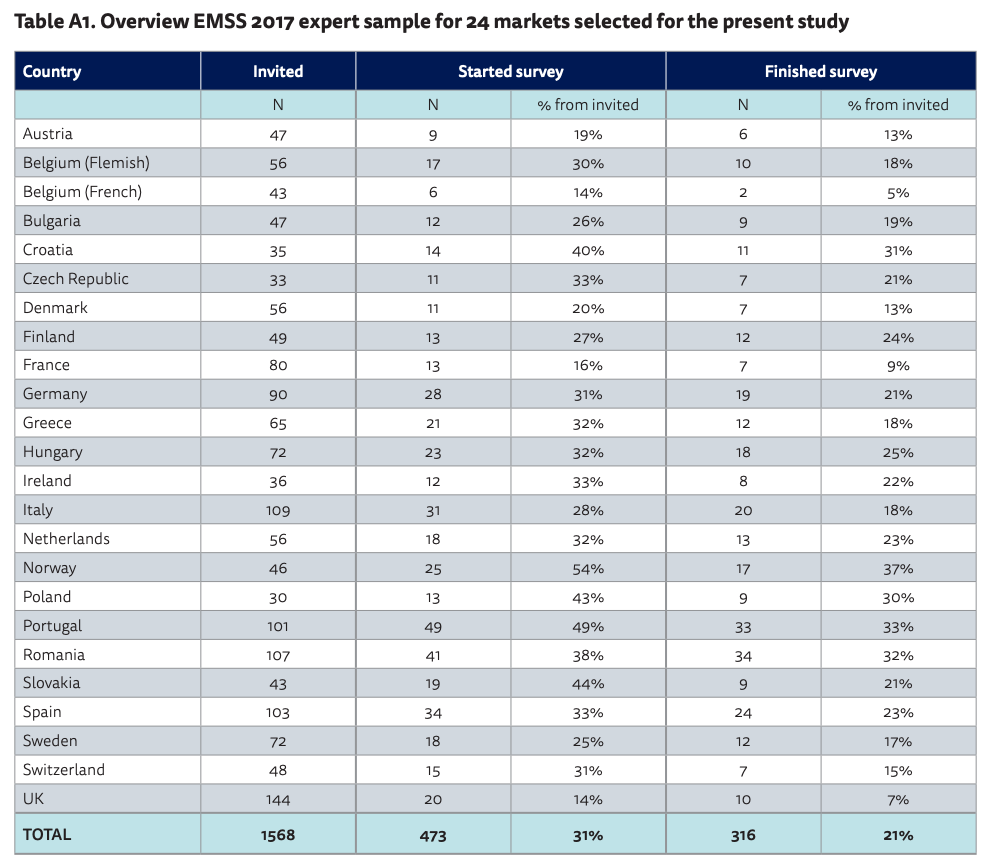

Looking at one correlation across several countries might conceal national differences, meaning that there could be a negative correlation in some countries. To be sure that we do not overlook any such differences, we plotted separate lines for each of the countries in our sample in Figure 2. Each turquoise line now represents the correlation between expert and public news ratings within a single country. The fact that they all point in the same upwards direction as the overall correlation suggests that expert and audience news brand assessments also correlate positively within countries.

Figure 2. Correlation between public trust and expert accuracy ratings by country

We then performed more fine-grained analyses by separating news outlets by their type. This could be revealing because it is possible that, while some outlet types might be seen in the same way by experts and the public, for others, opinion might diverge. To explore this, we group our outlets into four types: news from public service media (PSM), newspapers, commercial TV news, and digital-born news outlets.

When we look at average trust and accuracy ratings by outlet type (Figure 3), we mostly see similarities between the views of the public and experts. Both groups rate news from public service media highest, and news from digital-born outlets lowest. On average, the public score public service media outlets 6.76 on the 0–10 trust scale, and digital-born outlets 5.83. Similarly, experts score public service media 7.12 for accuracy, and digital-born outlets 5.25.

However, newspapers and commercial TV news outlets rank slightly differently in each of the two groups. On average, experts rate newspapers (6.15) higher than commercial TV news (5.71) for accuracy, but when it comes to public trust, on average newspapers (6.14) and commercial TV (6.27) are about equal.
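The difference in ordering can be checked directly from the averages reported above. The short Python sketch below ranks the four outlet types by each group's mean score; the dictionary and function names are illustrative, while the numbers are the averages quoted in the text.

```python
# Average scores by outlet type, as reported in the text above.
public_trust = {
    "public service media": 6.76,
    "commercial TV": 6.27,
    "newspapers": 6.14,
    "digital-born": 5.83,
}
expert_accuracy = {
    "public service media": 7.12,
    "newspapers": 6.15,
    "commercial TV": 5.71,
    "digital-born": 5.25,
}


def ranking(scores):
    """Return outlet types ordered from highest to lowest mean score."""
    return sorted(scores, key=scores.get, reverse=True)


print("public: ", ranking(public_trust))
print("experts:", ranking(expert_accuracy))
```

Both rankings start with public service media and end with digital-born outlets; only the two middle positions swap.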

Figure 3. Average public trust and expert accuracy scores by outlet type

For the final analysis we move back to the scatterplot that we have already seen in Figure 1. Figure 4 shows the same data but this time different outlet types are highlighted in each of the four panels. We see, once more, that outlets that have higher accuracy ratings from experts tend to have higher trust ratings from the public, even when we look at each outlet type separately. We also see that most public service media outlets sit in the top-right part of the chart, indicating again that these outlets are rated highly by both experts and the public. Conversely, few digital-born outlets are rated highly by the public and experts; most are clustered around the middle of the chart. Both newspapers and commercial TV outlets are spread across the chart, indicating the diversity that exists within both of these types. As categories, newspapers include both tabloids and broadsheets, and commercial TV outlets vary both in terms of their obligations around news and their investment in news programming.

Figure 4. Correlation between public trust and expert accuracy assessments by outlet type

In Figure 4, we also show the trend line for all outlets that we have already seen in Figure 1. Deviation above and below this trend line can indicate differences of opinion between the public and the experts. Outlets that sit above the trend line are more trusted by the public relative to expert opinion on accuracy, and outlets that sit below the trend line are less trusted. There are no strong deviations for any of the four types of news outlet. But looking more closely, one could argue that the public has slightly higher levels of trust in television news, especially commercial TV, than we would expect from expert accuracy ratings, because it tends to sit above the overall trend line. At the same time, newspaper and digital-born outlets tend to sit just below the trend line, possibly indicating that these outlets are less trusted by the public than we might expect. These slight differences fit with our general understanding of television as a mass medium, and newspapers (at least in some countries) as increasingly serving a highly interested, highly educated audience.
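Reading deviations from a trend line can be sketched as a residual calculation. The Python example below assumes the trend line is an ordinary least squares fit of public trust on expert accuracy (the text does not state how the line in the figures was fitted) and averages the residuals by outlet type. The function names and all data points are hypothetical.

```python
from collections import defaultdict


def fit_line(xs, ys):
    """Ordinary least squares fit; returns (slope, intercept)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    slope = (
        sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
        / sum((x - mean_x) ** 2 for x in xs)
    )
    return slope, mean_y - slope * mean_x


def mean_residual_by_type(outlets):
    """Average distance above (+) or below (-) the overall trend line.

    outlets: list of (outlet_type, expert_accuracy, public_trust) tuples.
    """
    slope, intercept = fit_line(
        [accuracy for _, accuracy, _ in outlets],
        [trust for _, _, trust in outlets],
    )
    residuals = defaultdict(list)
    for outlet_type, accuracy, trust in outlets:
        expected_trust = intercept + slope * accuracy
        residuals[outlet_type].append(trust - expected_trust)
    return {t: sum(v) / len(v) for t, v in residuals.items()}


# Hypothetical outlets, not taken from either survey.
outlets = [
    ("commercial TV", 5.0, 6.0),
    ("commercial TV", 6.0, 6.5),
    ("newspaper", 5.0, 5.0),
    ("newspaper", 6.0, 5.5),
]
deviations = mean_residual_by_type(outlets)
print(deviations)
```

A positive average means that outlets of that type tend to sit above the line (more trusted than expert accuracy ratings would suggest), and a negative average means they tend to sit below it.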

Discussions and limitations

Our analysis clearly shows that the public generally has high trust in news outlets that experts believe ‘provide accurate information on facts backed by credible sources and expertise’ – and that this is consistent for 226 news outlets across 24 European news markets. This is also true for different news outlet types, where both groups rate news from public service media as most trusted/accurate, and news from digital-born outlets less so. If there are any differences, we can perhaps see that the public seem to rate newspapers and digital-born news outlets slightly lower relative to expert opinion. In contrast, the public seem to have higher trust in television, especially commercial TV.

Of course, these conclusions are entirely dependent on the data we analysed. It is important to keep in mind that there is no universal consensus on the accuracy of different news outlets in different countries. Experts can disagree on this, and it is always possible that expert evaluations do not necessarily reflect the underlying reality. Although the EMSS team have taken great care in selecting their experts (they built up their panel from previous rounds and have learned, for example, to shorten the questionnaire based on previous experience) expert surveys come with limitations, just like any other method used in social science. Just like the public, experts sometimes respond to partisan cues, or are forced to rely on short-cuts and assumptions in the absence of direct experience of journalistic practices within specific newsrooms. Indeed, experts and large parts of the public may draw on many of the same assumptions, which could explain the similarities in their views.

At the same time, online surveys of public opinion can be subject to a range of different biases. Survey respondents always have a choice of whether or not to take part, and those that do are often slightly different from the general population. This may mean that samples are slightly more knowledgeable about journalism and the media, and therefore more closely aligned with experts.

We should also keep in mind that the correlation we found was high but not perfect. The fact that people rate some outlets as more trusted on average does not mean that there is universal agreement. Some people do indeed have low trust in outlets that experts deem accurate, and vice versa.

More generally, we know that trust is far from synonymous with use or exposure. People often use news from sources they say they do not trust (Tsfati and Cappella, 2003). But by the same token, people do not trust everything they see, and are generally sceptical about news and information they see online (Fletcher and Nielsen, 2019). Our findings suggest that we should be less pessimistic about the public’s ability to navigate high-choice news environments, and caution against using exposure to information as a proxy for belief in it.

Footnotes

1 For more information on Facebook’s approach to selecting news sources for their news feed see https://about.fb.com/news/2018/01/trusted-sources/

2 More accurately, we analyse data from 24 European markets, as we analyse separate data for Flanders (Flemish-speaking) and Wallonia (French-speaking) in Belgium. These regions are generally considered to be home to two separate media systems.

3 We ran this analysis using the R package ‘correlation’ (Makowski et al. 2020).

References

- Fletcher, R., Nielsen, R. K. 2019. ‘Generalised Scepticism: How People Navigate News on Social Media’, Information, Communication and Society 22(12), 1751–69.

- Makowski, D., Ben-Shachar, M., Patil, I., Lüdecke, D. 2020. ‘Methods for Correlation Analysis’, CRAN. https://github.com/easystats/correlation

- Newman, N., Fletcher, R., Kalogeropoulos, A., Levy, D. A. L., Nielsen, R. K. 2018. Reuters Institute Digital News Report 2018. Oxford: Reuters Institute for the Study of Journalism. www.digitalnewsreport.org

- Popescu, M., Tóka, G., Santana Pereira, J., Gosselin, T. (forthcoming). European Media Systems Survey 2017. Data Set. Bucharest: Median Research Centre. www.mediasystemsineurope.org

- Tsfati, Y., Cappella, J. N. 2003. ‘Do People Watch What They Do Not Trust? Exploring the Association between News Media Skepticism and Exposure’, Communication Research 30(5), 504–29.

Acknowledgements

We would like to thank the research team at the Reuters Institute for their valuable feedback, as well as everyone involved in the European Media Systems Survey who helped by gathering and providing data.

About the Authors

Anne Schulz is a Research Fellow at the Reuters Institute for the Study of Journalism at the University of Oxford.

Richard Fletcher is Senior Research Fellow at the Reuters Institute for the Study of Journalism at the University of Oxford and leads the Institute’s research team.

Marina Popescu is the Director of the Median Research Centre, Bucharest.

Methodological appendix

For the analysis presented in this factsheet we made use of two survey data sets: the 2017 European Media Systems Survey (EMSS) and the 2018 Reuters Institute Digital News Report survey (DNR).

EMSS

The EMSS is an expert survey that was conducted for the first time in 2010 and repeated in 2013 and 2017 with the support of the EEA Research Programme (Grant 11 SEE/30.06.2014). Part of the survey asks experts familiar with particular media systems to rate different news outlets on dimensions that can be indicative of the quality of reporting, such as accuracy, depth, contextualisation, or political partisanship.

Here we used data from the 2017 wave for which experts in 35 European media systems were interviewed using a standardised online questionnaire. People were considered experts on media systems if they were working in a profession that requires extensive knowledge of the media landscape and of mediated social and political phenomena. Accordingly, experts from academic institutions in political science, communication, media studies, journalism, European studies, sociology, and, as much as possible, non-academic specialists in media monitoring, media economics analysis, media consultancy, or media/journalism training were invited.

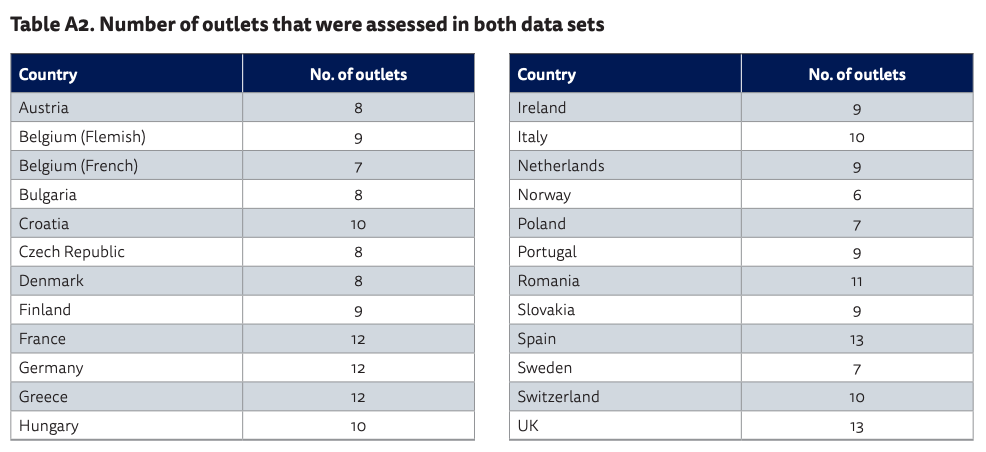

Respondents were invited by personal emails for the first time in March 2017 and last reminders were sent out in mid-June of the same year. The questionnaire was set up online and the EMSS team reports varying but overall reasonable response rates per country (see Table A1).

Table A1. Overview EMSS 2017 expert sample for 24 markets selected for the present study

The question on the accuracy of specific news outlets was worded as follows: ‘To what extent do these media provide accurate information on facts backed by credible sources and expertise?’

Responses were provided on a 0 to 10 scale, with 0 representing ‘never’ and 10 representing ‘always’.

Separate questions were used to ask experts about other dimensions, such as depth, contextualisation, or political partisanship. Some of these could be compared to public trust. However, we felt that the accuracy measure offered the most meaningful comparison.

We should note that expert surveys come with just as many caveats as any other research method. This starts with the procedures by which experts are selected, how they are contacted, and who responds. For example, experts might have certain political views which influence how they judge certain news outlets. However, in the absence of a universal consensus on the accuracy of individual news outlets, we believe this is a reasonable substitute.

For more information, see: http://www.mediasystemsineurope.org

DNR

The DNR is based on an online survey of news audiences commissioned by the Reuters Institute for the Study of Journalism. It started in 2012 and has since been repeated annually across an increasing number of media markets. We used the 2018 data set because the data collection was the closest in time to the EMSS data with the relevant outlet trust scores.

The 2018 DNR survey covered 37 markets. In each, a nationally representative sample of around 2,000 people was surveyed and asked about their news consumption and attitudes towards different news media. The survey was fielded at the end of January/beginning of February 2018 by YouGov (and their partners) using an online questionnaire.

In each country, the respondents were asked to rate the trustworthiness of 12–16 outlets. The question was worded as follows: ‘How trustworthy would you say news from the following brands is? Please use the scale below, where 0 is “not at all trustworthy” and 10 is “completely trustworthy”.’

Responses were provided on a 0 to 10 scale, with an option for ‘Haven’t heard of this brand’. These responses were excluded from the mean trust scores.
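The exclusion rule described above can be sketched as follows. This is an illustrative Python example only: it assumes ‘Haven’t heard of this brand’ answers are coded as `None`, ignores any survey weighting, and uses made-up responses.

```python
def mean_trust(responses):
    """Mean of 0-10 trust ratings, skipping 'Haven't heard of this brand'.

    Assumption: not-heard-of answers are coded as None. Returns None when
    no respondent gave a rating.
    """
    rated = [score for score in responses if score is not None]
    if not rated:
        return None
    return sum(rated) / len(rated)


# Hypothetical responses for one brand: three ratings, two not-heard-of.
responses = [8, 6, None, 7, None]
print(mean_trust(responses))  # 7.0
```

Excluding these answers means the score reflects only people who know the brand, so a little-known outlet is not pulled towards zero simply because few respondents have heard of it.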

For more information, see: http://www.digitalnewsreport.org

Combining Both Data Sets

Both surveys cover a range of different news outlets. In general, these outlets are the most prominent or the most widely used within each market. However, selection was approached slightly differently in the EMSS compared with the DNR. For the EMSS, experts were often asked to assess different news programmes, or asked separately about the online and offline news services from the brand. For example, in the UK, EMSS collected separate ratings for BBC One, BBC Two, and bbc.co.uk. In contrast to this, the DNR asked about trust in BBC News as a whole. Whenever this was found to be the case, we averaged (mean) the EMSS expert assessments for news services belonging to the same brand. This average was then matched to the DNR brand trust data.
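The matching step described above might look like the following minimal Python sketch. The service-to-brand mapping follows the BBC example in the text, but every score, the second brand, and all function names are hypothetical and are not actual survey results.

```python
from collections import defaultdict


def average_by_brand(service_ratings, service_to_brand):
    """Average expert ratings over all services belonging to one brand."""
    grouped = defaultdict(list)
    for service, rating in service_ratings.items():
        grouped[service_to_brand[service]].append(rating)
    return {brand: sum(v) / len(v) for brand, v in grouped.items()}


def match_brands(expert_by_brand, trust_by_brand):
    """Keep only brands present in both data sets, as (expert, public) pairs."""
    return {
        brand: (expert_by_brand[brand], trust_by_brand[brand])
        for brand in expert_by_brand
        if brand in trust_by_brand
    }


# Hypothetical expert accuracy ratings per service.
emss_service_ratings = {"BBC One": 8.0, "BBC Two": 7.0, "bbc.co.uk": 7.5, "Example Daily": 5.0}
service_to_brand = {
    "BBC One": "BBC News",
    "BBC Two": "BBC News",
    "bbc.co.uk": "BBC News",
    "Example Daily": "Example Daily",
}
# Hypothetical public trust scores per brand; "Example Daily" is absent.
dnr_brand_trust = {"BBC News": 7.0}

matched = match_brands(
    average_by_brand(emss_service_ratings, service_to_brand),
    dnr_brand_trust,
)
print(matched)  # {'BBC News': (7.5, 7.0)}
```

Brands that appear in only one of the two data sets drop out at the matching stage, which is why the number of matched outlets differs from country to country.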

Across the 24 markets covered in both data sets, 226 news outlets could be matched. The number of outlets we were able to match differs per country – ranging from six in Norway to 13 in Spain and the UK. Table A2 presents the count of news outlets per country for which we found expert and audience quality assessments.

Table A2. Number of outlets that were assessed in both data sets

We further coded each outlet for its type to compare alignment of expert and public assessments across different news types. 115 outlets in the data are newspapers (either online or offline), 54 outlets are commercial TV news, 28 are public service news, and 29 are digital-born news outlets.

Published by the Reuters Institute for the Study of Journalism with the support of the Google News Initiative.

This report can be reproduced under the Creative Commons licence CC BY.