A viral profile made him a lightning rod for journalism’s AI anxieties. He sees himself as a trailblazer

An illustration created with Google's AI tool Nano Banana, featuring a busy newsroom where every reporter, anchor, and radio host resembles Fortune journalist Nick Lichtenberg.

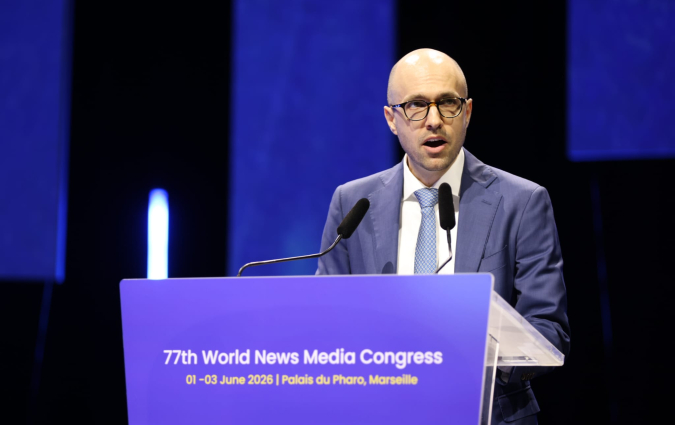

As more journalists share how they use generative AI in their work, AI-generated writing remains somewhat taboo. Nobody knows this better than Nick Lichtenberg, whose picture, in which he reclines in a chair with his feet up on his desk and his blue eyes on the camera, was inescapable in the parts of social media inhabited by journalists over the past couple of weeks.

Lichtenberg was profiled in a Wall Street Journal piece by Isabella Simonetti for his use of GenAI in his writing as an editor at the American business magazine Fortune. Since the article was published on 26 March, Lichtenberg has been the subject of many social media posts, Substack posts and podcasts. He has even appeared on the BBC's Media Show. He has also faced strong backlash, with some journalists expressing anger or dismay at what they see as the media industry taking a wrong turn.

We had the chance to speak to Lichtenberg about how he’s experiencing his sudden notoriety, as well as how he views the role of AI in the future of journalism. Our conversation has been edited for length and clarity.

Felix Simon: I was curious about how the Wall Street Journal story came about.

Nick Lichtenberg: I think it’s because Semafor reported on me soon after I was hired. Isabella [Simonetti, the author of the WSJ piece] reached out to me many months ago, so it was good old-fashioned shoe-leather journalism. She cultivated me as a source over a long time, and here we are.

Marina Adami: When you spoke to her, were you expecting this backlash?

Lichtenberg: I haven’t really engaged with the backlash. I don’t think that would be a healthy thing for me to do. So I know it’s out there, but I haven’t paid close attention to it. Some of it is slipping through to me. I don’t think I quite expected it, because from my perspective, I'm using a tool to do my job. It’s really as simple as that. I know it’s a loaded issue, and I knew that when I sat down for the profile, but it was still a bit unexpected.

Adami: What’s been your personal experience of this reaction?

Lichtenberg: It’s crazy. On a Puck podcast, someone talked about the ‘Lichtenberg era of journalism’. I’m trying to disengage from it a little. I’m trying to look at it as if it’s happening to someone else, and not to take it too personally or seriously, although it’s a very serious thing. It’s healthy to think about this as a moment in time and something that is provoking strong reactions right now. We will all collectively look back on it someday with a different perspective.

Adami: Had you already faced strong reactions to your work with AI in your newsroom?

Lichtenberg: Yeah, that’s fair to say. But I would focus more on my friend groups: right now, I’m having an argument with three of my closest friends, and they are all at different points on the spectrum of how they feel about this issue. This goes back to before I sat down for the profile with the Journal, but I’m feeling a strain in close, personal relationships.

I have a lot of Brooklyn-ish, upstate New York-ish friends, if you’re familiar with American culture, a lot of people of the left-leaning variety, and this has become a politicised issue on the left. And as a journalist, I’m not supposed to go into my own political leanings, but it's been a real strain there.

Adami: How did you start working with AI?

Lichtenberg: I was actually talking to a source just the other day, and he told me he’s pretty all-in on AI. But he started off as a hard ‘No’, and then came a little curiosity, and then fluency, when he had used enough different AI tools, and he said, frankly, some of them suck, and some of them are great, and some of them are great at certain things but suck at other things. But once he plugged himself into them properly, he said, ‘These are great and they are super helpful’.

And I had a similar trajectory. At first, I wasn’t a Luddite, but I thought, ‘These hallucinations really worry me’. At the time, you couldn’t click through in ChatGPT to see where it was pulling data from. That’s no longer the case. Once I could click through, I would play with it to see the sourcing underneath. As a journalist, I started seeing more utility there, and that’s been my journey.

Adami: How do you use AI on an average working day?

Lichtenberg: I’ve done long-form features for which AI helped me with transcripts and with outlining a structure of how I wanted to organise the information. I’ve had breaking news stories based on a press release about one of the biggest Fortune 500 companies. I’ve written stories on a general idea about something that I know to be happening, but I need help gathering all the sourcing together really quickly. There are other types of aggregation where I get a document, often a research note from an investment bank or a survey from Gallup or Deloitte or KPMG, and I can use AI to synthesise that information.

As I have told a lot of friends, I’m a music guy. I really love classic rock, punk rock, and the alternative and grunge of the 1990s. And this reminds me of when the synthesiser started coming into classic rock around 1971, with the Who’s album Who’s Next. Then, 10 years later, Bruce Springsteen was using a ton of synthesiser, and it started to sound like a classic instrument, when at first it had sounded really sci-fi and off-putting to a lot of people. I think AI is doing a similar thing to writing.


Simon: Have you ever noticed that AI systems shape your writing in a way that you don’t want them to? There's some research evidence that humans are sometimes too quick to accept what an AI system suggests to us in terms of writing, because we deem it qualitatively better, and that steers us away from what we would have actually said.

Lichtenberg: I try to be as disciplined as possible. My boss, Alyson [Shontell], told the Journal that most of these stories are at least 50% me, and you could look at that both ways: as a criticism or as a compliment. The friends I’m on less strained terms with are having some fun with this subject now, and I think the ‘50% me’ thing is a glass-half-full way to look at it: the AI is useful for gathering the quotes and stats and then all of the other stuff should be 50% me in terms of my voice, my journalistic interpretation of all of the raw material.

To me, using AI is a way to gather raw material really quickly. And that’s why I’ve been a little surprised by the reaction to this: because to me, it should be a journalist’s dream to have machines gather raw material for you quickly and synthesise information for you to check.

Adami: Was it your idea to use GenAI in your work?

Lichtenberg: It was a confluence of factors that were a bit out of my control. I was between gigs last summer. I’d stayed in touch with Alyson since my earlier time at Fortune. She said, “Here’s what we really need right now. Would you be up for this kind of challenge?” Some of the leadership experts I’ve talked to in my reporting have said this is the model of successful AI adoption.

When I was working at Netflix, we had this phrase, ‘informed captain’. There's an informed captain on every issue in that company. I was made the informed captain of Fortune’s AI pilot, and I was given a sandbox to play in. I was given a lot of trust and freedom to experiment.

The surveys I’ve seen, and the thought leaders and Fortune 500 experts I’ve talked to, say that a lot of AI experiments are not like that. They often come from a top-down mandate: everyone has to start using AI and producing receipts for the work they are doing with it, and the companies micromanage their adoption process. That wasn’t my experience. I think this moment favours the sandbox approach.

Adami: Fortune relies on a paywall and you have subscribers. Do AI-assisted articles drive subscriptions?

Lichtenberg: I don’t want to get too much into our business model or trade secrets, but I would say it’s been working for me and for us. As to the reasons behind that, I would say audiences are mysterious things. I’m really proud of the fact that we are growing in areas where a lot of our industry is shrinking. I’ve been a working journalist for a long time now, and the industry has been in crisis that entire time. So we should have all options on the table to fight back against this crisis.

Adami: Fortune also had layoffs last summer. Did the publication’s use of GenAI have anything to do with those? Could it prompt further layoffs?

Lichtenberg: I don’t want to get too much into that, because it’s not my space to talk about it. But it happened very soon after I came back. I think I was only back for three weeks when those layoffs happened. So it’s hard for me to see a connection, but it's not my place to comment.

Adami: What do you think the role of a journalist will be going forward?

Lichtenberg: One of the strange things about this moment for me is that I’ve become a character in the news cycle, and the journalist is not usually supposed to do that.

I have been a history nerd ever since I majored in history at Syracuse University over 20 years ago, and I love the history of journalism. I’m not saying my understanding is perfect, but people don’t always appreciate how constantly journalism has mutated over its history.

I really love the ‘yellow journalism’ era when William Randolph Hearst and Joseph Pulitzer had their big newspaper war, and it was called the yellow journalism era because of a popular comic strip called the Yellow Kid. The Yellow Kid is what would sell the newspapers and fund all of the great reporting that was done, and it was so important that there was a bidding war for it.

And I also know about the days in New York of Pete Hamill and Jimmy Breslin. And there were copy boys back then, and rewrite desks. The act of putting together a news story and cobbling together a business model for journalism has always been a wild and wacky ride, and I would encourage people to dig into that and look at it closely. I don’t know where we are right now. Maybe we are at a moment of change or progress.

Simon: This idea that news is created by a single mind is not just ahistorical. It also doesn’t reflect the current practice. Journalism gets edited. In breaking news situations, you’ve got various people taking your copy and rewriting it in all kinds of ways, including with the help of various systems.

Lichtenberg: People have very strong ideas about what journalism is, and what it should be. When I worked at Bloomberg, I used what was then called Bloomberg Automation, and that transformed earnings reports and how they were reported to the stock market.

At Bloomberg there were people called headliners or speed-deskers whose job was to dive into the press release of the earnings report and find the earnings-per-share number as fast as possible. They could get that down to about five seconds, and then Bloomberg Automation got it down to a millisecond. So those people’s jobs changed because of automation: they now back-read the robot and find more information. I did that job for about a year and a half, and it changed how I looked at this stuff.


Simon: What do you think of the idea that some journalistic work can’t be automated away, such as identifying and cultivating a source?

Lichtenberg: Doing this over the last nine months or so has pushed me into more original reporting, because all algorithms and all AI products are necessarily backward-looking. They can only tell you about the data and the inputs that already exist, and readers don’t want that.

Readers want a forward-looking angle. They want a human voice, and so that pushes journalists like me to do more journalism. So I see it as a positive story. I see AI as this thing that pushes people out into the field to do more things, and it should make a lot of time-consuming aggregation less time consuming.

Adami: There's a lot of discussion about AI being able to automate away lower-level tasks, allowing journalists to focus on high-level thinking. But data from our recent report, based on a survey of UK journalists, suggests that journalists who use AI more frequently are more likely to feel they work on low-level tasks too often. Has this been reflected in your experience?

Lichtenberg: It changes depending on the time of day and the kind of story I’m working on. Keeping a human in the loop is very important to me. I’m the last set of eyes, and the last human judgment on every single word that is in an article, and that means a lot of hyper-speed checking back and forth between different documents, making sure the numbers and quotes are exactly right. It’s second nature to me by this point.

That is just how human editors have to fact-check things. I’ve always been a high-metabolism news editor, too. Before AI existed, I was editing dozens of stories a day and fact-checking hundreds of statistics a day. But there are times when my brain gets a little tired.

Adami: Some researchers are pointing to something called ‘AI brain fry’ – a kind of cognitive fatigue from multitasking and monitoring different AI outputs. Have you experienced something like that?

Lichtenberg: Yeah, definitely, I’ve had AI brain fry. But that’s also something that dates back many years in my work process. I enjoy a high-octane, high-metabolism experience of news.

Adami: Our research also found that audiences are still cautious about AI use in journalism, more so for front-end tasks like writing than for back-end tasks like spelling and grammar checks. Have you heard from audience members about your use of GenAI?

Lichtenberg: I’ve got some aggressive, almost funny messages like ‘You're not even a real person, you're a robot’. Just funny insults like that. And some people also say, ‘Hey, this is really cool’. So the responses run the gamut, I would say, but some people seem really angry about it. But I also think people should look closely at this technology and what it enables people to do. Sometimes I think, if it wasn’t called ‘AI’, if it was called ‘mass information processing computer plugin’, then people would feel differently.
