Advertising was always going to come for AI chatbots. The real question is how

Dominika Čupková & Archival Images of AI + AIxDESIGN / https://betterimagesofai.org / https://creativecommons.org/licenses/by/4.0/

Some truths are universal. Gravity pulls downwards. Water is wet. Human attention spans are limited. Another constant – I’d wager – is that as long as capitalism exists, someone will be trying to sell something to someone else. To do so, they need to make others aware of it and persuade them to part with their money. The solution, for better or worse, is advertising.

AI companies have now embraced this uncomfortable truth. On 16 January, OpenAI announced it would soon trial advertisements in free and low-tier versions of ChatGPT, after months of speculation that it would do exactly that. As of early February, these tests are live. The likely reason is simple: artificial general intelligence remains distant, costs are staggering, and investors are impatient. Subscriptions are not enough. Most people do not pay for access to a chatbot, and many probably never will. Enterprise and government contracts help, but cannot plug the gap quickly enough.

Meanwhile, millions already use these systems to answer questions and seek recommendations, particularly younger users. That is an audience advertisers will pay handsomely to reach. And there is plenty of money involved in advertising. According to WPP Media, global ad revenue excluding U.S. political advertising will grow 8.8% in 2025 to $1.14 trillion.

The inevitable logic of “free” services

The tech industry’s journey to advertising has always been predictable, even if every generation pretends otherwise.

“We expect that advertising-funded search engines will be inherently biased towards the advertisers and away from the needs of the consumers,” declared not some critical tech scholar but Sergey Brin and Lawrence Page in an early paper. Today, their creation, Google, derives most of its revenue from ads. Reed Hastings of Netflix called advertising “exploitation” in 2020. Last year, Netflix’s ad revenue exceeded $1.5bn.

The logic is inescapable. Advertising may not be the nicest or most efficient market solution for getting others to buy, but it is the most widely adopted. Few places enable it more efficiently than online platforms that bring together buyers, sellers and consumers in one digital space. For tech companies, the temptation is irresistible: once you have captured users, you can monetise them at negligible marginal cost. The new AI entrants would succumb eventually, many reasoned. They have. So much for the “Why ads, why now?”

Banners or buried persuasion?

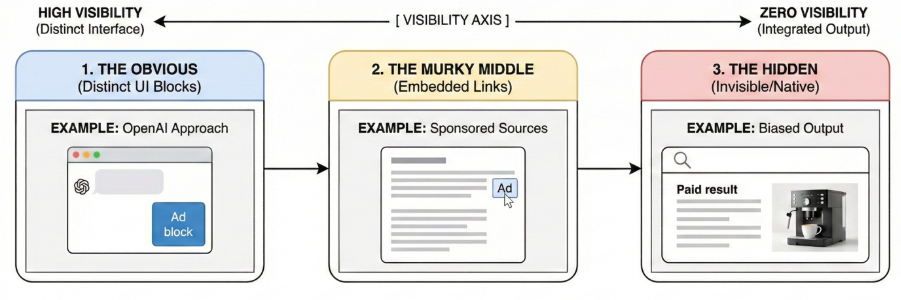

Now that we are at this fork in the road and know which way AI companies are going, the question becomes “What will this look like?” While the actual design will take many forms, I would argue we can think of possible formats as a spectrum from “obvious” to “hidden”, or from traditional banner ads to seamlessly embedded commercial content.

OpenAI’s proposed approach sits squarely at the “obvious” end: distinct advertising blocks within the chat window, similar to banner ads but tailored to users by “matching ads submitted by advertisers with the topic of your conversation, your past chats, and past interactions with ads”.

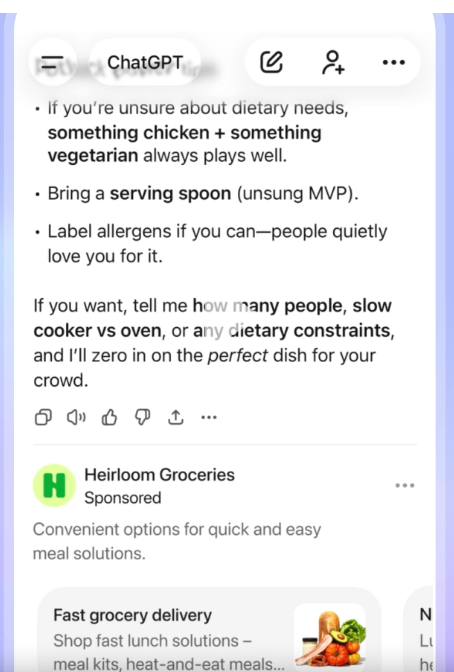

But one can just as easily imagine a migration of some companies towards the spectrum’s murkier middle: sponsored links nestled amongst cited sources, marked with a small “Ad” label that users may or may not notice.

At the extreme lies advertising that is functionally invisible because it is part of the output. Ask for the best coffee brand, and perhaps Lavazza would top the list – not because it is objectively superior, but because it pays. Or request an image of a modern kitchen, and a Lavazza machine appears on the counter instead of a generic one. This would be native advertising taken to its logical, troubling conclusion. (And no, Lavazza did not pay for this paragraph).

Granted, this latter scenario is perhaps not the most likely. Many countries already prohibit such “shadow advertising”, and for good reason. The backlash when users discover hidden commercial influence could be severe, inviting lawsuits and regulatory crackdowns.

Winners and losers

Which neatly leads us to the broader implications that we need to consider – and that AI companies might want to consider too.

The most serious questions concern the governance and fairness of ad inclusions. What products can be advertised? To whom? Should children see advertisements in their homework helper? What about vulnerable groups? Political advertising raises even thornier issues. These are not new problems – Google and Meta, for example, have grappled with them for years – but AI’s conversational intimacy could make them more acute. Content moderation, that perpetual headache, will extend to advertising moderation in AI systems, too. Granted, there is an ample supply of current and former employees of tech companies, plus a cadre of researchers, regulators and other advisers who can help navigate this maze. And it’s likely AI companies will need them. Regulators and citizens will not tolerate lax standards, and the battles will be fierce.

Then there is the question of what it will mean from a political economy perspective. Or to put it in simpler terms: Who will win, who will lose? It’s too early to say in some regards, but we can make some educated guesses. The winners are easy to identify. Incumbents like Google, Meta, and increasingly Amazon possess large user bases and sophisticated advertising infrastructure. OpenAI is clearly trying to build similar capabilities at remarkable speed and is nipping at their heels, but unseating the existing oligopoly remains unlikely in the near term. Over the longer run, the landscape may shift – though incumbents rarely cede ground easily. Granted, not all AI companies will follow this path. OpenAI courts consumers globally, so advertising makes strategic sense. Google’s model is similar. Anthropic, by contrast, focuses on enterprise clients and is unlikely to pursue consumer advertising as aggressively (at least for now). Some, like Perplexity, have rowed back from advertising entirely. Whether this will last remains to be seen.

Another set of winners is advertisers, who will surely welcome the development. Brands fret about how AI systems portray them, and advertising offers some control where they otherwise have little to none. Competition should also improve pricing, assuming the economists are correct on this one. Of course, advertisers risk becoming losers in a different way if they are hoodwinked by the platform providers. Google, for example, is alleged to be no stranger to manipulating its ad market in its own favour (Google is appealing the decision). And whether ads in an AI system will work as well as promised is another matter. AI companies will claim that their systems will know users better and target them more precisely. Perhaps. But expecting massive leaps in performance would be naive.

A sure group of losers will be news publishers, who will face yet another indignity in their long disruption. But then again, their advertising revenue has already haemorrhaged to digital platforms. So perhaps for once they have to worry less in this context, because their slice of the advertising pie is already small. This is less about further losses than redistribution amongst the giants.

The biggest losers? Potentially, consumers, especially those who have grown used to chatbots that are both free and ad-free. Privacy risks loom. And the starting gun on just how intrusive such ads could be has only just been fired. AI’s personalised, conversational nature could make advertising feel unbearably invasive, depending on how ads are implemented. The very quality that attracts advertisers could end up repelling users.

Where to from here?

It’s too early to say exactly how things will pan out. So what, if anything, should be done now? If we leave aside the question of whether advertising is generally a morally defensible activity, the answer largely comes down to a further set of questions. How effective is this new form of advertising, and for whom? Is it political or not? Is it overt or not? Who will get to see it, and when and where? Can you opt out? And who will stand to gain the most?

So much is clear: the people using these systems deserve protection. Advertising should be clearly labelled, not hidden. Children should be shielded from commercial manipulation. Political advertising requires stringent transparency. And users should be able to opt out meaningfully, not through buried settings.

These are not novel demands. They are the same problems digital platforms have faced, and too often dodged, for two decades. A broader lesson is that business models shape behaviour, and advertising shapes incentives in particular ways. Systems optimised for advertising revenue could easily end up prioritising commercial outcomes over user welfare when the two conflict. Regulators, tech companies and users should proceed with eyes open.

And so the road to AGI, it turns out, runs through the same commercial pressures that have shaped every previous technology platform. There is nothing inevitable about the specific choices AI companies make. Whether the end goal can and will be thus achieved is an open and contested question, with many unknowns – but we have good mental models for what advertising can do. Proceed with caution.

This article originally appeared on NIKKEI Digital Governance. It represents the views of the author, not the views of the Reuters Institute.